What is MLOps?

MLOps is the practice of designing and deploying machine learning solutions so that they are automated and scalable within a company’s workflow. The concept has emerged in the data science field over the past five years.

When it comes to the steps needed to get a machine learning model into production within a company’s architecture, the ML code is the tiniest sliver of the pie.

There are many questions that need to be answered, like:

- How do you do A/B testing across different subgroups?

- How do you do feature engineering across different datasets?

- How do you manage serving inference?

- How do you monitor all this and what should you do if the model goes down?

- How do you account for data drift?

The questions above are a small subset of what companies need to consider for an efficient and scalable MLOps solution. They illustrate that the data science modeling code itself is small compared with everything else needed to run a model successfully in production. With an MLOps architecture in place, teams of data engineers, data scientists, and DevOps engineers can work in a scalable, collaborative environment.
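To make the data drift question above more concrete, here is a minimal sketch of one common drift check, the population stability index (PSI), which compares the distribution of a feature at training time with its distribution in production. The function name, the bin count, and the sample data are assumptions made for illustration; they do not come from the Snowflake session, and a real monitoring setup would likely track many features and use a dedicated tool.

```python
import math
from typing import List, Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one numeric feature; larger values mean more drift."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("expected sample has no spread; cannot build bins")
    width = (hi - lo) / bins

    def bin_fractions(values: Sequence[float]) -> List[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the training range are clamped into the edge bins.
            idx = int((v - lo) / width)
            idx = max(0, min(bins - 1, idx))
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or a division by zero.
        return [max(c / len(values), eps) for c in counts]

    e = bin_fractions(expected)
    a = bin_fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical data: a training sample and a production sample shifted upward.
training = [i / 100 for i in range(1000)]
production = [x + 5 for x in training]

print(population_stability_index(training, training))
print(population_stability_index(training, production) > 0.25)
```

A PSI above roughly 0.25 is a commonly cited rule of thumb for significant drift, but the right threshold depends on the feature and the model, so treat it as a starting point rather than a fixed rule.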

What is the difference between MLOps and DevOps?

A lot of people confuse DevOps and MLOps.

MLOps can be thought of as a derivative of DevOps that evolved as data science models became more prevalent in the data world.

DevOps is a practice that eases the pain points of software development by introducing practices such as version control for product releases. MLOps does something similar by streamlining the development and testing process, but it is centered on the entire AI/ML workflow.

Diagram 1.1: Pyramid of Data Science and MLOps Structure

Why Use MLOps and What Are the Benefits?

- Development Speed
  - Standard processes are set in place for teams to operate in parallel
  - Less time spent onboarding and learning the architecture

- Scalability
  - Easily increase or decrease compute as demand changes
  - Automated monitoring and deployment of models

- Collaboration
  - Cross-disciplinary teams all working within one environment
  - No data silos

- Data Governance
  - Compliance with all regulated industry standards
  - Use Snowflake as the secure single source of truth

- Repeatable Templates
  - Adding and removing features is very simple
  - Use Terraform and Kubernetes to easily deploy and introduce new resources

Why Use Data Science and MLOps with Snowflake?

- Single source of truth for data
  - Unstructured, structured, and semi-structured data
  - No gap between the data used for experiments and the data used by models in production

- Governance
  - Lets data scientists mask data that might be PII/PHI
  - Object tagging and role-based access control (RBAC)

- Snowpark API
  - Gives data scientists an interface to work in
  - All data stays within your Snowflake environment

- Access third-party data
  - No need for a team of data engineers to fetch data for your models
  - You can use the data marketplace to get real-time, up-to-date data

- Automate feature engineering through streams and tasks
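As an illustration of the last point in the list above, the sketch below builds the Snowflake SQL for a stream that captures new rows in a source table and a task that periodically turns them into features. The table names, column names, warehouse name, and the log transform are all hypothetical and chosen only for the example; the helper simply returns SQL strings and does not connect to Snowflake.

```python
from typing import List


def feature_refresh_statements(
    source_table: str,
    feature_table: str,
    warehouse: str,
    schedule: str = "5 MINUTE",
) -> List[str]:
    """Return SQL that wires a stream and a task into a feature refresh job."""
    stream = f"{source_table}_STREAM"
    task = f"{feature_table}_REFRESH"
    return [
        # The stream records rows that changed in the source table.
        f"CREATE OR REPLACE STREAM {stream} ON TABLE {source_table}",
        # The task runs on a schedule, but only when the stream has new data.
        # CUSTOMER_ID and ORDER_TOTAL are hypothetical columns.
        f"CREATE OR REPLACE TASK {task}\n"
        f"  WAREHOUSE = {warehouse}\n"
        f"  SCHEDULE = '{schedule}'\n"
        f"WHEN SYSTEM$STREAM_HAS_DATA('{stream}')\n"
        f"AS\n"
        f"  INSERT INTO {feature_table} (CUSTOMER_ID, ORDER_TOTAL_LOG)\n"
        f"  SELECT CUSTOMER_ID, LN(ORDER_TOTAL + 1) FROM {stream}\n"
        f"  WHERE METADATA$ACTION = 'INSERT'",
        # Tasks are created suspended, so the task must be resumed to run.
        f"ALTER TASK {task} RESUME",
    ]


statements = feature_refresh_statements("RAW_ORDERS", "ORDER_FEATURES", "ML_WH")
for statement in statements:
    print(statement + ";\n")
```

With a Snowpark session available, each statement could be run with something like `session.sql(statement).collect()`. Identifiers are interpolated directly into the SQL here, so this sketch is only suitable for trusted, hard-coded names.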

Use Case: Kubeflow with Snowflake

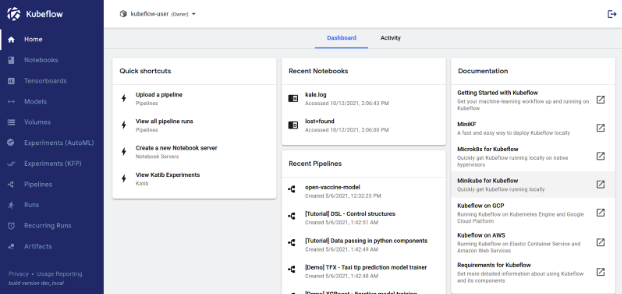

Kubeflow is an MLOps software platform that lets data scientists and data engineers create, deploy, and monitor ML models in a production setting. Its easy-to-use UI lets users deploy and monitor Snowpark UDFs in dev and production environments.

Picture 1.1: Screenshot taken from Kubeflow dashboard home

Some questions that might come up when deploying models into production with Kubeflow and Snowflake:

- How do you manage different versions of the model?
  - Use Kubeflow to see the different versions of the model, then extract the metadata and tag the model within the dashboard

- Why is Snowpark shown in batch inference but not online inference?
  - Online inference capabilities are expected in the future

- Do Snowpark calculations use Snowflake memory?
  - Snowpark operations are translated to SQL and executed in Snowflake; they are not held in memory as they are in Spark

- When defining a model UDF, are companies restricted to a template version, or can they customize?
  - You can bring your own models, packages, and libraries and deploy with Snowflake
  - Snowpark supports 1500 packages from Anaconda; you can write code in the same way you write Spark or Python code

- Why choose Kubeflow?
  - You can use other ML platforms of your choice; Snowflake and Snowpark focus on the core data-learning capabilities
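The answer above about Snowpark work being translated to SQL can be pictured with a toy example. The class below is not the Snowpark API; it is a hypothetical stand-in that only shows the general idea of lazy evaluation, where DataFrame-style calls build up a query description and produce SQL for the database to execute instead of loading rows into client memory.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class LazyFrame:
    """Toy lazily evaluated frame; it stores a query plan, never any rows."""

    table: str
    columns: Tuple[str, ...] = ("*",)
    predicates: Tuple[str, ...] = ()

    def select(self, *cols: str) -> "LazyFrame":
        # Returns a new plan with a different projection; nothing is executed.
        return LazyFrame(self.table, cols, self.predicates)

    def filter(self, predicate: str) -> "LazyFrame":
        # Returns a new plan with one more WHERE condition.
        return LazyFrame(self.table, self.columns, self.predicates + (predicate,))

    def to_sql(self) -> str:
        # Only at this point is the accumulated plan rendered as SQL text.
        sql = f"SELECT {', '.join(self.columns)} FROM {self.table}"
        if self.predicates:
            sql += " WHERE " + " AND ".join(f"({p})" for p in self.predicates)
        return sql


# CUSTOMERS, ID, and AGE are hypothetical names used only for this example.
query = LazyFrame("CUSTOMERS").filter("AGE > 30").select("ID", "AGE")
print(query.to_sql())  # prints: SELECT ID, AGE FROM CUSTOMERS WHERE (AGE > 30)
```

In this sketch the heavy lifting would happen wherever the generated SQL runs, which is the contrast with an in-memory engine that the answer draws.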

Scalable MLOps + Snowflake

There are many ways to deploy machine learning models into production in your company’s environment, and a range of software specializes in doing so. This demo shows how complex deploying an ML model can be, the questions that need to be answered before deployment, and one option for integrating your Snowpark models with Kubeflow.

Some of the information presented here was discussed at a recent Snowflake Summit session titled “Building a Foundation for Scalable MLOps with Snowflake.” The session was presented by Susan Devitt, Data Cloud Architect – GSI Snowflake, and Chase Ginther, Data Science & Machine Learning Platform Architect – Snowflake.

Additional resources on Snowflake and Kubeflow can be found here: