Data volume is growing exponentially. It’s a phrase we hear again and again across industries, but nowhere is this mantra more relevant than in healthcare. The ability to capture and store a patient’s medical information across platforms, insurers, and providers is rapidly becoming an essential function of care. For those who deal with claims data, that kind of visibility and security can feel like a tall order, but with the right approach and tools, it’s no longer out of reach.

This article will offer a lens into data science applications that make it possible to use healthcare claims data to improve patient outcomes and decrease hospital operations costs. To illustrate the impact of these practices, we’ll walk through a hospital care scenario that revealed the efficacy of heart procedures across a broad patient data set, accounting for numerous demographic, lifestyle, and medical variables.

Hospitals are required to record specific details about every care encounter, including details on diagnoses, procedures and how specific charges were distributed among involved parties. The granularity of this data opens up opportunities to create robust models that can predict diagnoses, recognize at-risk groups, and ultimately save patients and hospitals money.

Despite the potential for large dividends, data science in healthcare seems to attract less fanfare than it does in other industries. Claims data is bulky, difficult to read, and requires rigorous cleaning, all of which adds to the complexity of analysis. This piece will discuss specific claims data challenges, the rewards that follow, and how cloud platforms can help bridge the gap between the two.

Tough to Work On, Large Rewards

Hospital claims or encounter data is required to be detailed in order to line up with the requirements of insurance providers, and this enables a broad range of analytics opportunities. Health encounter data includes details on the patient (demographic data), as well as detailed codes on diagnoses and procedures.

On a micro scale, analysis of what kind of individual is more likely to receive a diagnosis or need a procedure could improve early detection of cancers and reduce costly procedures for patients. On the macro scale, health encounter data could be converted to panel data using repeat patients over time to better forecast when demand for procedures is higher or lower during the year.

The same analyses can also be applied to costs. Discovering conditions early that would otherwise require time- and cost-intensive procedures saves hospitals money and keeps utilization down. Speaking again to the macro scale, analysts could convert health encounter data to panel or time series datasets and use this data to forecast hospital load and avoid shortages.
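As a rough illustration of that conversion, the sketch below rolls encounter-level records up into a monthly time series of procedure counts, which is the shape a demand forecast would consume. The records, field layout, and function names are made up for the example and do not reflect any particular claims schema.

```python
from collections import Counter
from datetime import date

# Hypothetical encounter records: (patient_id, service_date, procedure_code).
encounters = [
    ("P1", date(2008, 1, 14), "CABG"),
    ("P2", date(2008, 1, 30), "PTCA"),
    ("P1", date(2008, 2, 3), "PTCA"),
    ("P3", date(2008, 2, 21), "PTCA"),
    ("P2", date(2008, 4, 9), "CABG"),
]


def monthly_procedure_counts(records):
    """Count encounters per (year, month, procedure)."""
    counts = Counter()
    for _patient_id, service_date, procedure in records:
        counts[(service_date.year, service_date.month, procedure)] += 1
    return counts


def to_series(counts, procedure, year):
    """Return a dense 12-month series, with zeros for months with no encounters."""
    return [counts.get((year, month, procedure), 0) for month in range(1, 13)]


counts = monthly_procedure_counts(encounters)
ptca_2008 = to_series(counts, "PTCA", 2008)
cabg_2008 = to_series(counts, "CABG", 2008)
```

Filling empty months with zeros matters here: a forecasting model needs an evenly spaced series, and a month with no recorded procedures is a data point, not a gap. Keeping `patient_id` in the grouping key instead of dropping it would give the panel form mentioned above.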

The initial rush on hospitals during the COVID-19 pandemic is a great example of why using data to forecast demand can save lives and money for hospitals. Analysis prior to the COVID-19 outbreak treated a global pandemic as a low-probability event, so probabilistic forecasts didn’t account for high hospital utilization combined with shortages of basic personal protective equipment (PPE).

As a consequence, hospitals were overloaded and understaffed. This not only impacted access to hospital beds for at-risk individuals with COVID-19, but also delayed important procedures and diagnoses for individuals with more traditional conditions. Properly utilizing healthcare claims data can enable hospitals to forecast how these factors change in relation to one another.

Modern Challenges with Analyzing Claims Data

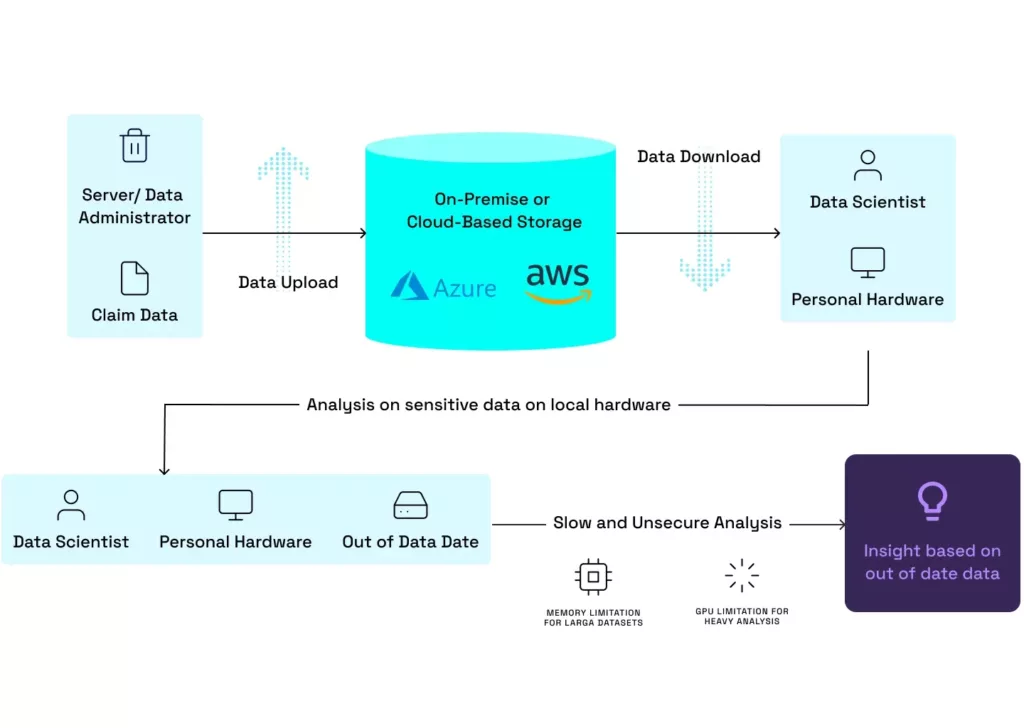

Traditionally, when we think about the workflow of a data scientist or analyst who gains insight from data, we imagine the analyst downloading a point-in-time version of the data, writing and executing code on a local machine, and transforming these results into a consumable format. Figure A illustrates this process and the many limitations introduced by integrating claims data. Given its sensitive nature, allowing analysts to download raw data to personal computers introduces significant data security risks.

The sheer size of claims data also puts new strain on analysts’ hardware. Most claims datasets exceed the RAM and storage capacity of most consumer machines, and for heavy analysis, a more powerful GPU can become a must. Even if analysts clear these hurdles, their results will likely be out of date and difficult to distribute due to both their size and the frequent updates to claims as new care encounters take place.

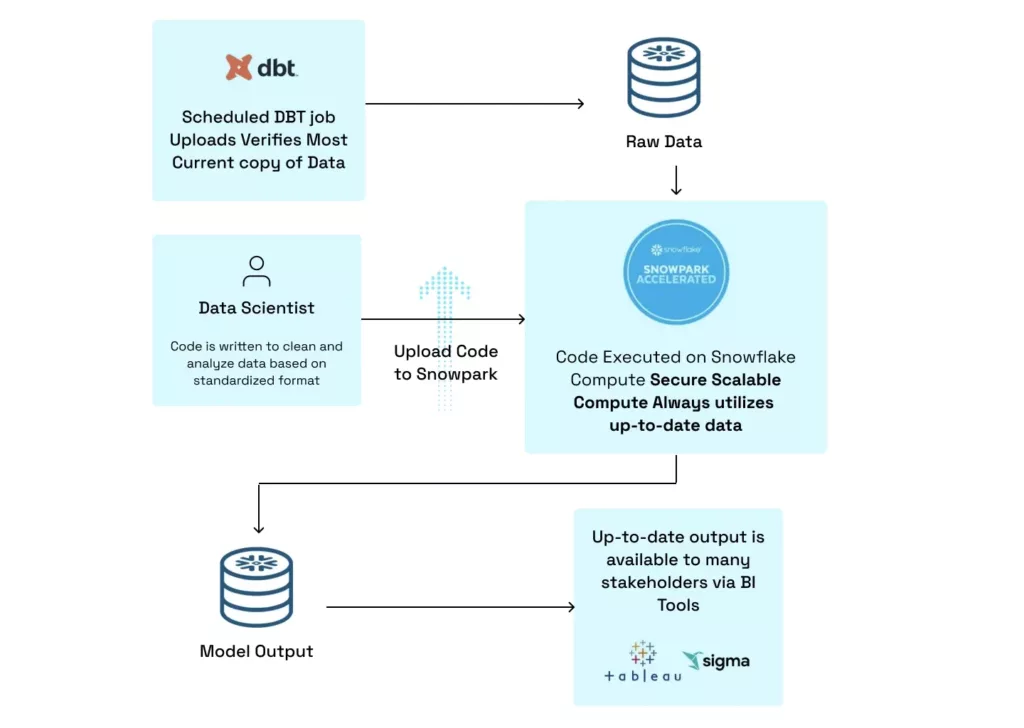

Fortunately, new tools offered by Snowflake allow healthcare providers to carry out traditional data science work on a secure platform with scalable compute. These new features bypass all of the bottlenecks that analysts encounter when working with claims data locally. Figure B indicates how analysts can push their code to a Snowflake UDF, where it is executed on Snowflake’s compute engine utilizing the most recent copy of the data.

After analysis is completed, results can easily be written to a separate Snowflake table, where they can be accessed using any visualization tool with a Snowflake connector. Taking advantage of these tools, analysts can be sure they’re all working with the most up-to-date version of their data and that there is no risk of sensitive patient data leaving Snowflake. Recent updates to Snowpark have enabled analysts to run increasingly demanding models within Snowflake, expanding this use case to memory- and GPU-heavy model training.

A final advantage of this workflow is that it gives more members of an organization access to the results of analysis in their tools of choice. Previously, an analyst might have to run a model and transfer the data manually to a cloud storage solution, where another analyst would have to display the data in an understandable manner for key decision makers. With the workflow in Figure B, analysts simply build a model and have the results written to Snowflake, where anyone in the organization can access them.
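A minimal sketch of this push-code-to-the-data pattern using Snowpark for Python might look like the following. It assumes the `snowflake-snowpark-python` package and an already-created `Session`. The table names, the `AGE` and `N_COMORBIDITIES` columns, and the `risk_score` function with its placeholder coefficients are all hypothetical and stand in for whatever model an analyst actually builds.

```python
import math


def risk_score(age: int, n_comorbidities: int) -> float:
    """Toy logistic score. The coefficients are placeholders, not fitted values."""
    z = -4.0 + 0.03 * age + 0.5 * n_comorbidities
    return 1.0 / (1.0 + math.exp(-z))


def score_claims_in_snowflake(session, source_table: str, target_table: str) -> None:
    """Register risk_score as a UDF, apply it in Snowflake, and save the results.

    `session` is an existing snowflake.snowpark.Session.
    """
    # Imported lazily so the scoring logic above can be tested without Snowpark.
    from snowflake.snowpark.functions import col
    from snowflake.snowpark.types import FloatType, IntegerType

    # Ship the Python function to Snowflake as a UDF.
    risk_udf = session.udf.register(
        risk_score,
        return_type=FloatType(),
        input_types=[IntegerType(), IntegerType()],
        name="risk_score",
        replace=True,
    )

    # The computation runs on Snowflake's compute; no rows are pulled locally.
    claims = session.table(source_table)
    scored = claims.with_column(
        "RISK_SCORE", risk_udf(col("AGE"), col("N_COMORBIDITIES"))
    )

    # Write results to a separate table that BI tools can read.
    scored.write.mode("overwrite").save_as_table(target_table)
```

Calling `score_claims_in_snowflake(session, "CLAIMS_FEATURES", "CLAIMS_RISK_SCORES")` (both table names hypothetical) would leave the scored rows in Snowflake, so the raw claims never reach the analyst's machine.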

The final section of this article will detail an application of this system to illustrate how a hospital utilizing claims data can save itself and its patients discomfort and money.

Forecasting Efficacy of Costly Procedures

A common cause of heart failure among patients is the presence of a blocked or clogged artery. These plaque buildups restrict blood flow to the heart and can cause chest pain or raise the likelihood of heart failure. Two common procedures, coronary artery bypass graft surgery (CABG) and percutaneous transluminal coronary angioplasty (PTCA), offer methods for clearing blockages (PTCA) or rerouting blood flow (CABG).

While these procedures might be effective at reducing pain and reducing heart failure, they come at a cost to patients in terms of procedure time, recovery time and financial cost. For hospitals, these procedures take up hospital beds and occupy the time of skilled doctors who could otherwise focus on more critical patients. Claims data offers the opportunity to quantitatively measure who will and will not benefit from these procedures.

When deciding whether or not to have an invasive procedure, patients weigh the costs in terms of pain and recovery time against the potential benefit to their health or lifespan. It is often tempting to choose the invasive procedure that takes three hours over lifestyle changes or accepting a potential loss in lifespan.

Many of these procedures may have no effect on health and net their recipients only a painful recovery and further risk of death. As an example, a coronary artery bypass graft surgery (CABG) involves taking a blood vessel from elsewhere in the body, often the leg, and grafting it onto the heart to increase blood flow and decrease risk of heart attack. An individual might be tempted to paint this surgery as an easy, fast solution to a common problem faced by patients.

But the reality is that recovery from this procedure takes around 12 weeks, patients are fatigued for at least six weeks, and the risk of heart attack is elevated for the first month after the procedure. If physicians are overzealous in prescribing these procedures, which are costly both monetarily and for patient health, and the procedures make no difference, then patients are better off changing their lifestyle habits or accepting a shorter lifespan and prioritizing the time they have.

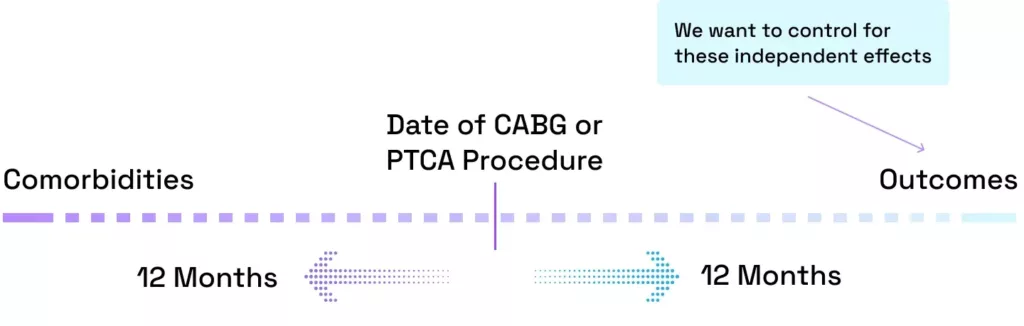

Thanks to techniques powered by a modern data stack, Hakkoda is able to help its healthcare clients track the health of patients before and after a CABG or PTCA procedure and estimate the direct effect of these procedures on the probability of an individual having a heart attack. The Centers for Medicare & Medicaid Services (CMS) provides a dataset tracking individuals via medical claims from 2008 to 2010 (SynPUFs). We looked at all individuals who underwent a CABG or PTCA procedure in 2008 and tracked their other health events: those in the 12 months before the procedure were labeled comorbidities, and those in the 12 months after were labeled outcomes. The accompanying figure gives a visual representation of this design.
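The windowing logic described here can be sketched in a few lines. This is only an illustration under simple assumptions: 12 months is approximated as 365 days, events on the procedure date itself are ignored, and the event tuples and condition names are invented for the example and are not the SynPUF schema.

```python
from datetime import date, timedelta

WINDOW = timedelta(days=365)  # assumption: 12 months approximated as 365 days


def label_events(procedure_date, events, window=WINDOW):
    """Split a patient's other claims into comorbidities and outcomes.

    Events in the window before the procedure are comorbidities; events in
    the window after it are outcomes. Anything outside both windows, or on
    the procedure date itself, is dropped.
    """
    comorbidities, outcomes = set(), set()
    for event_date, condition in events:
        if procedure_date - window <= event_date < procedure_date:
            comorbidities.add(condition)
        elif procedure_date < event_date <= procedure_date + window:
            outcomes.add(condition)
    return comorbidities, outcomes


# Hypothetical patient with a procedure on 2008-06-01.
events = [
    (date(2006, 1, 1), "high_cholesterol"),  # too early, dropped
    (date(2007, 9, 1), "hypertension"),      # within 12 months before
    (date(2008, 3, 10), "diabetes"),         # within 12 months before
    (date(2008, 11, 2), "heart_attack"),     # within 12 months after
    (date(2010, 1, 1), "heart_attack"),      # too late, dropped
]
comorbidities, outcomes = label_events(date(2008, 6, 1), events)
```

Running this per patient yields one row per procedure with a set of comorbidity flags and an outcome flag, which is the input a regression on heart attack likelihood needs.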

For this analysis, we only looked at outcomes for patients as a way to control for confounding factors that might raise or lower the likelihood of heart attack. By controlling for these factors along with race and gender, the analysis reveals whether, in general, CABG or PTCA procedures reduce the likelihood of heart attack in a patient. The results can be seen below.
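To make "controlling for these factors" concrete, here is a small sketch of one common way to do it: a logistic regression with the procedure and a comorbidity as predictors. This is an assumption about the general approach, not a description of the model actually used, and the cohort below is a toy dataset constructed so that the procedure has no effect within each comorbidity group. It is unrelated to the results shown in this article.

```python
import math


def fit_logistic(rows, labels, lr=0.5, steps=5000):
    """Fit logistic regression by batch gradient ascent.

    Returns [intercept, coef_1, ..., coef_k] on the log-odds scale.
    """
    n = len(rows)
    weights = [0.0] * (len(rows[0]) + 1)
    for _ in range(steps):
        grad = [0.0] * len(weights)
        for x, y in zip(rows, labels):
            z = weights[0] + sum(w * xi for w, xi in zip(weights[1:], x))
            err = y - 1.0 / (1.0 + math.exp(-z))
            grad[0] += err
            for j, xi in enumerate(x, start=1):
                grad[j] += err * xi
        weights = [w + lr * g / n for w, g in zip(weights, grad)]
    return weights


def make_group(procedure, comorbidity, n_patients, n_events):
    """Build identical feature rows with n_events heart attacks among them."""
    rows = [(procedure, comorbidity)] * n_patients
    labels = [1] * n_events + [0] * (n_patients - n_events)
    return rows, labels


# Toy cohort: heart attack rate is 10% without the comorbidity and 50% with
# it, regardless of whether the patient had the procedure.
groups = [
    make_group(0, 0, 10, 1),
    make_group(1, 0, 10, 1),
    make_group(0, 1, 10, 5),
    make_group(1, 1, 10, 5),
]
rows = [r for g_rows, _ in groups for r in g_rows]
labels = [y for _, g_labels in groups for y in g_labels]

intercept, b_procedure, b_comorbidity = fit_logistic(rows, labels)
odds_ratio_procedure = math.exp(b_procedure)      # close to 1: no effect
odds_ratio_comorbidity = math.exp(b_comorbidity)  # well above 1: higher risk
```

An odds ratio near 1 for the procedure, once comorbidities are in the model, is what "no measurable effect" looks like in this kind of analysis. In practice a statistics library would be used instead of a hand-written fit, since it also reports standard errors and significance.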

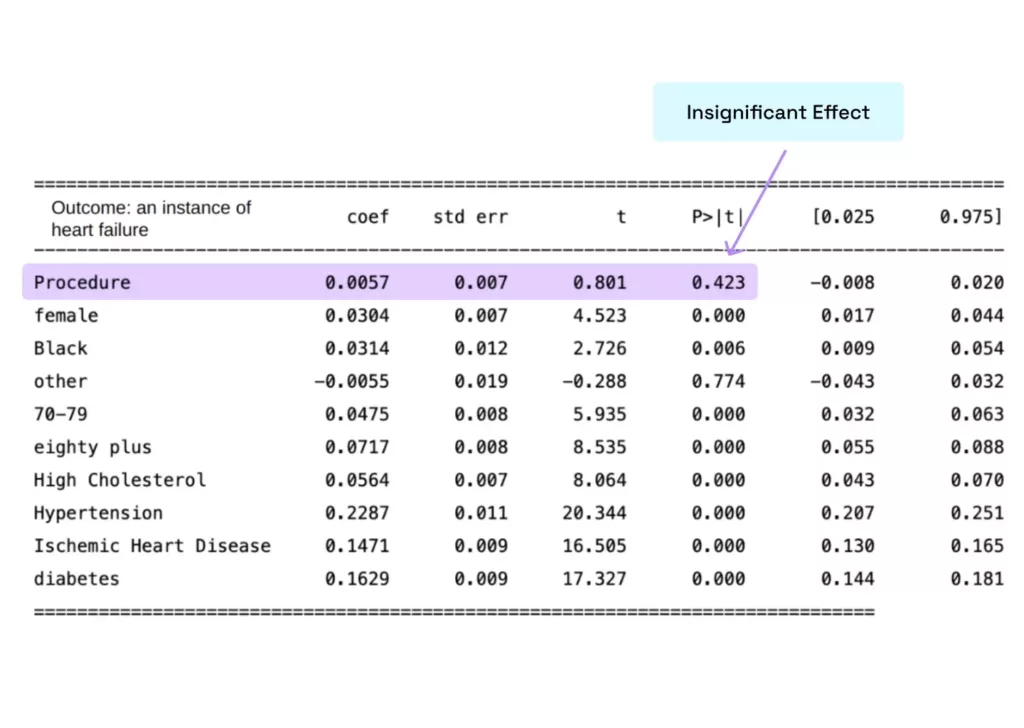

The results of this analysis are surprising in that they suggest that getting the procedure in question has a negligible effect on the likelihood of having a heart attack in the 12-month period after said procedure. Less surprisingly, avoiding conditions like high cholesterol, hypertension, ischemic heart disease and diabetes and being older all lower the likelihood of heart attack.

The implication of this analysis is that, in most cases, incentivizing patients to take preventative measures and maintain a high level of health may be more effective at preventing heart attacks than risky and costly procedures. It is also consistent with the view that procedures like PTCA or CABG carry great risk in the short term and can markedly lower patients’ quality of life during the recovery period. While many patients may struggle to change their lifestyle or habits, placing a higher priority on preventative care and not overprescribing surgical procedures will likely save patients money and lengthen their lifespans without compromising quality of life.

Innovating Claims Data

This style of claims data analysis would not be possible without advances in cloud computing and the integration of data science development tools within Snowflake via Snowpark.

At Hakkoda, the healthcare practice is capable of taking claims data, moving it to a data platform like Snowflake and enabling deep, meaningful analysis. In the future, we will continue to build models which help healthcare providers find operational efficiencies, make better decisions with patients, and improve outcomes.

Our highly trained team of experts at Hakkoda uses the latest functionalities and tools to build data solutions that help hospitals and patients. We leverage data engineering, data science, governance, and privacy expertise alongside full-stack application development. To start your data innovation journey with state-of-the-art solutions, contact us today.